Active Inference

Current AI relies on massive data but struggles with real-world chaos. Enter Active Inference: a physics-based framework where AI acts like a scientist, minimizing surprise through continuous learning. It’s the leap from passive pattern-matching to true agency.

We are living through a period of remarkable acceleration in artificial intelligence. Large Language Models (LLMs) have swallowed the internet, compressing human knowledge into astonishingly capable pattern-matching engines.

Today’s AI systems are incredible at interpolating within their training data, but they remain remarkably brittle when confronted with the messy, unpredictable reality of the physical world.

Imagine driving through a new city at night, in the pouring rain, with faded road markings and blinding reflections. For a human, it’s stressful but manageable. For an autonomous vehicle trained purely on massive datasets, conditions this far from its training data can cause performance to fall apart quietly and dangerously.

The question we must ask is: Why are humans so robust to the chaos of reality, while machines remain so fragile?

The answer lies in a fundamental shift in how we think about intelligence—moving away from passive pattern recognition and toward a physics-grounded framework known as Active Inference.

The Limits of the Black Box

To understand the leap we are about to make, we have to look at the architectural bottleneck of modern machine learning.

Right now, we train models as gigantic statistical engines. They take in vast oceans of data and output guesses based on historical patterns. But their knowledge isn’t organized causally. They don’t have an internal, structured story about how the world works. They are sophisticated autocompletes.

Because of this, they operate as black boxes. When an LLM or an autonomous agent makes an error, we can’t easily peer inside and ask, "What exactly did you believe was happening here, and how certain were you?"

Active Inference, a concept championed by neuroscientist Karl Friston and deeply rooted in physics, flips this paradigm on its head. It suggests that an intelligent system shouldn't just react to inputs. Instead, it should behave like an internal scientist.

The Brain as a Prediction Engine

You and I don’t merely react to the world; we anticipate it. When you’re driving in that rainstorm, you aren't waiting for the car to drift before you correct the steering. You are continuously predicting the friction of the road, the curve of the lane, and the behavior of the pedestrian.

When the world disagrees with your prediction, you experience a prediction error. You can then resolve it in one of two ways: update your internal model of the world so it predicts better, or act to make reality match your prediction (like hitting the brakes).
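To make those two routes concrete, here is a deliberately tiny sketch. It is not Friston's formulation, just a one-dimensional toy under my own assumptions: the "world" is a lane offset in metres, the sensor is noise-free, and the function names and gains (`perceive`, `act`, `learning_rate`, `gain`) are made up for illustration.

```python
# Toy 1-D sketch of the predict / compare / update-or-act loop.
# All names and numbers are illustrative assumptions, not a standard API.

def perceive(belief, observation, learning_rate=0.5):
    """Route 1: revise the belief so it better predicts the observation."""
    prediction_error = observation - belief
    return belief + learning_rate * prediction_error


def act(world, belief, preferred, gain=0.5):
    """Route 2: steer so the world moves toward the preferred state.

    The agent only uses its belief here, never the true world state.
    """
    return world - gain * (belief - preferred)


def run(steps=50, world=1.0, belief=0.0, preferred=0.0):
    for _ in range(steps):
        observation = world                      # noise-free sensor, for simplicity
        belief = perceive(belief, observation)   # update the model
        world = act(world, belief, preferred)    # change the world
    return world, belief


final_world, final_belief = run()
print(final_world, final_belief)
```

Both numbers end up very close to zero: the belief has caught up with the world, and the world has been steered to where the agent expected to be. A real agent would face noisy sensors and a far richer model, but the shape of the loop is the same.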

Active Inference formalizes this loop. It treats perception, learning, and action not as separate modules bolted together, but as a single, unified process driven by one principle borrowed from statistical physics and Bayesian inference: the minimization of variational free energy.

Minimizing Surprise in a Chaotic World

In everyday physics, systems tend toward stable, low-energy states. Think of a soap bubble settling into the shape that minimizes its surface area. Active Inference applies a similar minimization logic to intelligence.

In this framework, "free energy" is an upper bound on surprise: how improbable the incoming sensory data are under the agent's internal model of the world. Lowering it means either improving the model or changing the data. A system that wants to survive, whether it's a single cell, a human, or an AI agent, cannot afford to be constantly surprised. Too much surprise means you are in a state you don't understand, which is dangerous.
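A small numeric sketch shows the relationship between free energy and surprise. Assume a driver with two hidden road states ("dry", "wet") and two possible sensations ("grip", "slip"). The probabilities below are invented for illustration. Variational free energy for a belief q is the sum over states of q(s) · [ln q(s) − ln p(o, s)], and surprise is −ln p(o).

```python
import math

# Illustrative generative model (made-up numbers).
prior = {"dry": 0.7, "wet": 0.3}                      # p(s)
likelihood = {                                        # p(o | s)
    "dry": {"grip": 0.9, "slip": 0.1},
    "wet": {"grip": 0.4, "slip": 0.6},
}


def surprise(obs):
    """Negative log probability of the observation under the model."""
    evidence = sum(prior[s] * likelihood[s][obs] for s in prior)
    return -math.log(evidence)


def posterior(obs):
    """Exact Bayesian posterior p(s | o)."""
    evidence = sum(prior[s] * likelihood[s][obs] for s in prior)
    return {s: prior[s] * likelihood[s][obs] / evidence for s in prior}


def free_energy(q, obs):
    """Variational free energy of belief q given observation obs."""
    return sum(
        q[s] * (math.log(q[s]) - math.log(prior[s] * likelihood[s][obs]))
        for s in q
        if q[s] > 0
    )


obs = "slip"
f_before = free_energy(prior, obs)           # belief not yet updated
f_after = free_energy(posterior(obs), obs)   # belief after exact inference
print(f_before, f_after, surprise(obs))
```

Before the driver updates their belief, free energy sits above the surprise. Once the belief matches the true posterior, the two coincide. Perception, in this picture, is the process of pushing free energy down toward that floor.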

But this doesn’t mean the agent just hides in a dark room to avoid the unpredictable. To minimize surprise over the long term, the system must explore: it must proactively sample the world to reduce its own uncertainty. This offers a principled answer to one of AI's oldest headaches, the exploration vs. exploitation dilemma, because the quantity the agent minimizes when choosing actions (expected free energy) rewards both reaching preferred outcomes and gaining information. Under Active Inference, curiosity isn’t a bolted-on reward function; it is a fundamental drive.
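Here is a rough sketch of the curiosity part alone. It computes only the information-gain (epistemic) term that appears in expected free energy and ignores preferences entirely, so treat it as one ingredient rather than the full quantity. The two actions, "look" and "wait", and their sensor models are assumptions made up for this example.

```python
import math


def entropy(p):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(x * math.log(x) for x in p if x > 0)


def expected_info_gain(prior, likelihood):
    """Expected reduction in uncertainty about the hidden state.

    likelihood[s][o] is p(o | s) for the action being evaluated.
    """
    n_states, n_obs = len(prior), len(likelihood[0])
    gain = entropy(prior)
    for o in range(n_obs):
        p_o = sum(prior[s] * likelihood[s][o] for s in range(n_states))
        if p_o == 0:
            continue
        post = [prior[s] * likelihood[s][o] / p_o for s in range(n_states)]
        gain -= p_o * entropy(post)
    return gain


prior = [0.5, 0.5]  # the agent is maximally unsure which state it is in

actions = {
    "look": [[0.9, 0.1], [0.1, 0.9]],  # informative sensor reading
    "wait": [[0.5, 0.5], [0.5, 0.5]],  # observation says nothing about the state
}

gains = {name: expected_info_gain(prior, lik) for name, lik in actions.items()}
best_action = max(gains, key=gains.get)
print(gains, best_action)
```

With no preferences in play, the agent picks "look", simply because that action is expected to shrink its uncertainty. Waiting in the dark room teaches it nothing, so it scores zero.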

The Physics of Agency: Markov Blankets

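What fascinates me about this approach is how it respects the physical boundaries of systems. In biology, every organism is separated from its environment by a boundary, such as a cell membrane or human skin. Active Inference formalizes this as a Markov blanket: the set of sensory and active states through which everything inside the system and everything outside it influence each other.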

Your brain never touches the outside world directly. It sits in a dark skull, inferring reality through the noisy signals crossing this boundary. Because access to reality is indirect, the system must rely on a strong internal "generative model" of how the world works.

If we embed physical constraints, like conservation laws and object permanence, into an AI's generative model, we can drastically reduce the amount of data the system needs to learn. It’s the difference between forcing a neural network to learn gravity from a billion images, and giving it a structural prior that respects the laws of physics. The result should be far more data- and energy-efficient.
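As a toy illustration of what a structural prior buys you, suppose the model already knows that a dropped object falls according to d = ½ · g · t². Then only one number, g, is left to learn. The three measurements below are invented for the example, not real data.

```python
# Made-up drop measurements: (fall time in seconds, distance fallen in metres).
measurements = [(0.5, 1.25), (1.0, 4.85), (1.5, 11.10)]


def estimate_g(data):
    """Least-squares fit of g in d = 0.5 * g * t**2 (a one-parameter model)."""
    xs = [0.5 * t ** 2 for t, _ in data]
    ds = [d for _, d in data]
    return sum(x * d for x, d in zip(xs, ds)) / sum(x * x for x in xs)


g_hat = estimate_g(measurements)
print(g_hat)
```

Three noisy points are enough to land close to 9.8 m/s², because the form of the law was supplied up front. A model with no such structure would have to discover the quadratic relationship itself before it could even begin to estimate the constant.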

The Synthesis: LLMs meet Active Inference

So, where does this leave the generative AI boom?

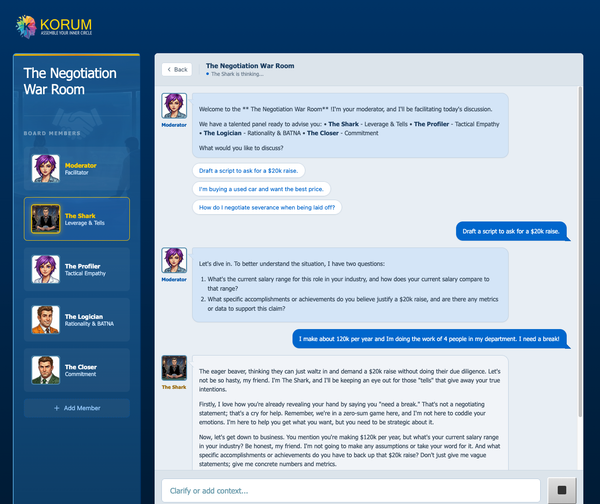

LLMs are brilliant at capturing the broad strokes of human knowledge, but they lack agency. They don’t know how to test a hypothesis in the real world.

The most exciting horizon is a hybrid model. Imagine using an LLM as the "imagination engine" of an agent: generating hypotheses, summarizing context, and proposing plans. The agent's core decision-making spine, however, would be governed by Active Inference. The system would constantly measure, act, update, and, crucially, track its own uncertainty.
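One way to picture that division of labour, as a sketch only: the LLM proposes, and an explicit probabilistic core decides. Everything here is hypothetical. `propose_plans` stands in for an LLM call, `OUTCOME_MODEL` stands in for the agent's generative model, and the grid scenario and numbers are invented. The score used is just the "risk" term, meaning how far the predicted outcomes are from the preferred ones, measured as a KL divergence.

```python
import math


def propose_plans(context):
    """Hypothetical stand-in for an LLM call that suggests candidate plans."""
    return ["shed_load", "do_nothing"]


# Hypothetical generative model: predicted outcome probabilities per plan.
OUTCOME_MODEL = {
    "shed_load": {"stable": 0.9, "overload": 0.1},
    "do_nothing": {"stable": 0.5, "overload": 0.5},
}

# What the agent wants to observe (its prior preferences).
PREFERRED = {"stable": 0.99, "overload": 0.01}


def risk(predicted, preferred):
    """KL divergence from predicted outcomes to preferred outcomes."""
    return sum(
        p * math.log(p / preferred[o]) for o, p in predicted.items() if p > 0
    )


def choose_plan(context):
    """Score each proposed plan explicitly and return the least risky one."""
    scores = {
        plan: risk(OUTCOME_MODEL[plan], PREFERRED)
        for plan in propose_plans(context)
    }
    return min(scores, key=scores.get), scores


best_plan, plan_scores = choose_plan("grid frequency is dropping")
print(best_plan, plan_scores)
```

The point of the design is that the scores are inspectable. You can ask what the agent believed each plan would lead to and why it preferred one over the other, which is exactly the question a black box cannot answer.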

Think about the implications for complex systems: managing power grids, optimizing supply chains, or running hospitals. These are dynamic environments full of delayed effects where traditional, backward-looking AI struggles.

We are moving from building machines that merely read the weather report to machines that run the weather station. By grounding artificial intelligence in the rigorous, self-correcting principles of physics, we aren't just building smarter AI. We are building systems that are transparent, efficient, and deeply aligned with the reality of the physical world.

This is the next layer of the exponential transition. Keep an eye on it.